I built this project after running into a familiar problem: great shows, hard-to-follow dialogue, and subtitles that were missing or just not very good. So I put together a command-line tool called Bibble Subs, a Python app that takes a video file, extracts the audio, runs speech-to-text with faster-whisper, and writes proper subtitle files you can drop straight into a media player.

The goal was practical, not academic. I wanted something I could point at a real episode, choose how much of it to process, and get usable results quickly, whether I was running on CPU or CUDA. Along the way, I added guardrails for file validation, time slicing, model download options, language selection, and multiple subtitle output formats including SRT, VTT, and ASS.

In this post, I will walk through the approach, key parts of the code, and what worked well in practice so you can build your own subtitle pipeline or adapt this one to your workflow.

The Problem

A while ago I wanted to watch the television series "When the Boat Comes In". It is set in the North East of England. I have attached a clip of the opening so you can see what you are up against.

Tooling

I didn’t want to build a speech‑recognition system from scratch — I just wanted subtitles that didn’t look like they’d been typed by someone who’d only heard English described to them once, in a pub, in 1973. So I leaned on a few solid tools to do the heavy lifting while I stitched everything together with Python.

Here’s what’s under the hood.

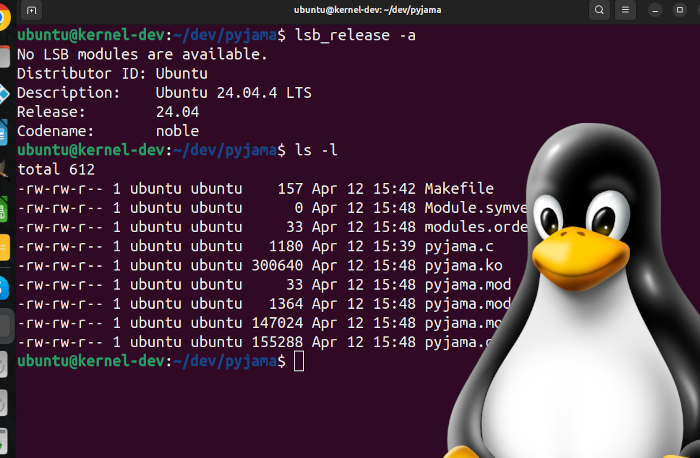

PyAV (FFmpeg under the hood) — the media workhorse

Every video file is a different flavour of chaos. I use PyAV, which sits on top of FFmpeg libraries, to open media files, decode audio streams, and write extracted audio for transcription.

faster‑whisper — Whisper, but without the gym membership

This is the speech‑to‑text engine. It runs on CTranslate2, which means:

- fast on CPU

- very fast on CUDA

faster‑whisper also gives me word‑level timestamps, which is perfect for building proper subtitle files instead of "vibes‑based timing". PyTorch is included as well, but only for GPU device detection and CUDA support.

Torch + CUDA — optional turbo mode

If you’ve got a GPU, Bibble Subs will happily use it.

If you don’t, it won’t sulk — it just switches to CPU and carries on.

The goal was flexibility, not “you must own a gaming PC to watch telly”.
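Here is a minimal sketch of that fallback, assuming PyTorch is used only to ask whether a CUDA device is visible. The function name `pick_device` is my own for illustration and may not match the real Bibble Subs code; `torch.cuda.is_available()` is the standard PyTorch call.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch can see a GPU, otherwise "cpu"."""
    try:
        import torch  # optional dependency, only needed for GPU detection
    except ImportError:
        # No PyTorch installed: carry on with the CPU instead of failing.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Transcribing on: {pick_device()}")
```

Wrapping the import in a `try` keeps the GPU strictly optional: a machine without PyTorch simply lands on the CPU path.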

External Whisper models — pick your fighter

You can choose from:

- tiny

- base

- small

- medium

- large‑v3

The tool will download the model for you if it’s missing.

Bigger models give better accuracy; smaller ones are faster.

It’s like choosing between instant noodles and a slow‑cooked stew — both work, it just depends how hungry you are.

Python CLI — because a GUI would have slowed me down

The whole thing runs from the command line.

You point it at a video, choose a model, optionally pick a time range, and it spits out subtitles in:

- SRT (classic)

- VTT (web‑friendly)

- ASS (for people who want karaoke effects or just enjoy chaos)

It’s simple, scriptable, and easy to automate.
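To give a feel for the interface, here is a rough `argparse` sketch. The program name and every flag name (`--model`, `--start`, `--duration`, `--language`, `--format`, `--output`) are assumptions for illustration, not the tool's documented options.

```python
import argparse

MODELS = ["tiny", "base", "small", "medium", "large-v3"]
FORMATS = ["srt", "vtt", "ass"]


def build_parser() -> argparse.ArgumentParser:
    """Build a parser with hypothetical flag names for the options described above."""
    parser = argparse.ArgumentParser(
        prog="bibble-subs",  # hypothetical program name
        description="Generate subtitles from a video file.",
    )
    parser.add_argument("video", help="path to the input video file")
    parser.add_argument("--model", choices=MODELS, default="small")
    parser.add_argument("--start", type=float, default=0.0,
                        help="where to start, in seconds")
    parser.add_argument("--duration", type=float, default=None,
                        help="how many seconds to process (default: to the end)")
    parser.add_argument("--language", default=None,
                        help="language code; omit to auto-detect")
    parser.add_argument("--format", dest="fmt", choices=FORMATS, default="srt")
    parser.add_argument("--output", default=None, help="subtitle output path")
    return parser


# Parse an explicit argument list so the example does not depend on sys.argv.
args = build_parser().parse_args(
    ["episode01.mkv", "--model", "medium", "--start", "60",
     "--duration", "120", "--format", "vtt"]
)
print(args.video, args.model, args.start, args.duration, args.fmt)
```

Using `choices` means a typo in the model or format name fails immediately with a readable error, before any audio is touched.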

How It Works

Bibble Subs follows a simple idea: take a video, pull the sound out of it, let a very clever model listen to it, and then turn the results into subtitles that don’t make you question your life choices.

Under the hood, the pipeline looks like this:

1. You point it at a video

Bibble Subs validates the path and checks for a real video track with MediaInfo before doing any heavy work. It can handle most formats supported by your local PyAV/FFmpeg build.

You can also choose:

- where to start

- how long to process

- which model to use

- which subtitle format you want

It’s your show.
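The validation at the start of this step could look roughly like the sketch below. It assumes the MediaInfo check goes through the `pymediainfo` wrapper, which is a guess on my part; `validate_video` is a hypothetical name.

```python
from pathlib import Path


def validate_video(path: str) -> Path:
    """Fail fast on a bad path or a file with no video track."""
    video = Path(path)
    if not video.is_file():
        raise FileNotFoundError(f"No such file: {video}")

    # Imported lazily so the cheap path check runs even without MediaInfo.
    from pymediainfo import MediaInfo  # assumption: pymediainfo is the wrapper in use

    info = MediaInfo.parse(str(video))
    if not any(track.track_type == "Video" for track in info.tracks):
        raise ValueError(f"No video track found in {video}")
    return video
```

Doing the path check first means a mistyped filename is rejected before any media library is loaded.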

2. PyAV extracts and slices audio

This is the “scoop the audio out of the video” step. The app opens the container, finds the audio stream, seeks to the requested start time, and processes frames until it reaches the requested duration.

This keeps transcription fast and avoids feeding Whisper an entire hour when you only needed the first scene.
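A sketch of the seek-and-slice idea with PyAV follows. It is not the actual Bibble Subs code: it keeps the samples in memory instead of writing an audio file, it slices at frame granularity (so it can lose a fraction of a second at the edges), and it assumes a PyAV version where `AudioResampler.resample()` returns a list of frames. The names `clip_window` and `extract_audio` are mine.

```python
from typing import Optional, Tuple


def clip_window(start: float, duration: Optional[float]) -> Tuple[float, Optional[float]]:
    """Return (start, end) in seconds; end is None when no duration is given."""
    if start < 0:
        raise ValueError("start must be >= 0")
    if duration is not None and duration <= 0:
        raise ValueError("duration must be > 0")
    end = None if duration is None else start + duration
    return start, end


def extract_audio(path: str, start: float = 0.0,
                  duration: Optional[float] = None, rate: int = 16000):
    """Decode the requested slice as mono float32 samples at `rate` Hz."""
    import av  # PyAV, imported lazily so clip_window works without it
    import numpy as np

    start, end = clip_window(start, duration)
    chunks = []
    with av.open(path) as container:
        stream = container.streams.audio[0]
        resampler = av.AudioResampler(format="s16", layout="mono", rate=rate)
        # With no stream argument, seek() expects av.time_base units (microseconds).
        container.seek(int(start * av.time_base))
        for frame in container.decode(stream):
            # Seeking lands at or before the target, so skip frames that start early.
            if frame.time is None or frame.time < start:
                continue
            if end is not None and frame.time >= end:
                break
            for resampled in resampler.resample(frame):
                chunks.append(resampled.to_ndarray().reshape(-1))
    if not chunks:
        return np.zeros(0, dtype=np.float32)
    # Scale 16-bit integers into the [-1, 1) float range.
    return np.concatenate(chunks).astype(np.float32) / 32768.0
```

Breaking out of the decode loop as soon as the end time is reached is what keeps a two-minute request from decoding the whole episode.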

3. faster‑whisper does the listening

This is where the magic happens.

The model:

- loads the Whisper size you chose

- runs on CUDA if you’ve got it

- falls back to CPU if you don’t

- detects the language (unless you tell it)

- uses VAD (voice activity detection) to skip silence and avoid hallucinating dialogue that never existed

- produces word‑level timestamps

The result is a clean, structured transcription with segment timings and text that actually matches what people said.
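The steps above map fairly directly onto the faster-whisper API. The sketch below is one way to wire them up under a few assumptions: the `compute_type` choices (`float16` on CUDA, `int8` on CPU) are common defaults rather than anything confirmed for Bibble Subs, and the helper names and returned dictionary shape are my own.

```python
def compute_type_for(device: str) -> str:
    """Pick a CTranslate2 compute type: float16 on GPU, int8 on CPU (assumed defaults)."""
    return "float16" if device == "cuda" else "int8"


def transcribe(audio_path: str, model_size: str = "small",
               device: str = "cpu", language=None):
    """Run faster-whisper and return a list of plain segment dictionaries."""
    from faster_whisper import WhisperModel  # imported lazily; heavy dependency

    # The model is downloaded on first use if it is not already cached.
    model = WhisperModel(model_size, device=device,
                         compute_type=compute_type_for(device))
    segments, info = model.transcribe(
        audio_path,
        language=language,      # None lets the model detect the language
        vad_filter=True,        # skip stretches with no speech
        word_timestamps=True,   # attach per-word start and end times
    )
    print(f"Language: {info.language} (p={info.language_probability:.2f})")

    # `segments` is a generator; iterating it is what actually runs the model.
    return [
        {
            "start": seg.start,
            "end": seg.end,
            "text": seg.text.strip(),
            "words": [(w.start, w.end, w.word) for w in (seg.words or [])],
        }
        for seg in segments
    ]
```

One thing worth knowing: because `segments` is lazy, nothing is transcribed until you loop over it, which is why the list comprehension sits at the end.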

4. The transcript gets turned into subtitles

Once the text is ready, Bibble Subs converts it into your chosen format:

- SRT — the classic

- VTT — for browsers and modern players

- ASS — for people who enjoy karaoke effects or just want to feel powerful

Each format has its own writer, so the timing stays accurate and the output looks like something a human might have made.
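Most of the difference between the three formats is how they spell a timestamp. The sketch below shows that, plus a minimal SRT renderer; the function names and the `(start, end, text)` tuple shape are assumptions for illustration, not the real writers.

```python
def _split_ms(seconds: float):
    """Split seconds into (hours, minutes, seconds, milliseconds) using integer maths."""
    total_ms = max(0, round(seconds * 1000))
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return hours, minutes, secs, millis


def srt_time(seconds: float) -> str:
    h, m, s, ms = _split_ms(seconds)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"  # SRT uses a comma before the millis


def vtt_time(seconds: float) -> str:
    h, m, s, ms = _split_ms(seconds)
    return f"{h:02d}:{m:02d}:{s:02d}.{ms:03d}"  # WebVTT uses a dot


def ass_time(seconds: float) -> str:
    h, m, s, ms = _split_ms(seconds)
    return f"{h:d}:{m:02d}:{s:02d}.{ms // 10:02d}"  # ASS uses centiseconds


def render_srt(segments) -> str:
    """Render (start, end, text) tuples as numbered SRT cues."""
    blocks = []
    for index, (start, end, text) in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n{srt_time(start)} --> {srt_time(end)}\n{text.strip()}\n"
        )
    return "\n".join(blocks)


print(render_srt([(0.0, 1.5, "Hello"), (2.0, 3.25, "How are you?")]))
```

Converting to whole milliseconds once, up front, avoids the classic rounding bug where 59.9996 seconds is printed as "59,1000".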

5. You get a subtitle file you can drop straight into a media player

That’s it.

No cloud services, no accounts, no “upload your entire video to our server and hope for the best”.

Just a local tool that does one job well.

Future Improvements

Bibble Subs works well today, but there are a few things I want to polish so it feels less like a “developer tool I built for myself” and more like a “proper grown‑up application”.

1. Smarter output handling

Right now, if you don’t specify an output path, Bibble Subs politely drops your subtitles into:

/tmp/subtitles.srt

Which is fine… unless you forget to move it, reboot your machine, and then wonder why your subtitles have mysteriously evaporated. Future versions will:

- create output files next to the original video

- or inside a user‑specified folder

- or inside a project‑level “subs/” directory

- with automatic naming based on the video file

Basically: fewer disappearing subtitles, more “oh, there it is”.
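The naming logic for this is small. A possible sketch, with `default_output_path` and its parameters being hypothetical names for a feature that does not exist yet:

```python
from pathlib import Path
from typing import Optional


def default_output_path(video: Path, fmt: str = "srt",
                        out_dir: Optional[Path] = None) -> Path:
    """Name the subtitle file after the video, next to it unless out_dir is given."""
    target_dir = out_dir if out_dir is not None else video.parent
    return target_dir / f"{video.stem}.{fmt}"


print(default_output_path(Path("shows/ep01.mkv")))
print(default_output_path(Path("shows/ep01.mkv"), "vtt", Path("subs")))
```

Keeping the video's stem has a practical bonus: many media players pick up a subtitle file automatically when it shares the video's name.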

2. Batch / folder processing

At the moment, Bibble Subs handles one file at a time.

That’s great for a single episode, less great when you’re staring at a folder containing an entire season.

Planned improvements include:

- point it at a folder → it processes everything

- automatic naming per file

- optional parallel processing

- optional “only process files without existing subtitles” mode

This turns it from a handy tool into a proper workflow.
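Here is one way the folder scan could work, including the "skip files that already have subtitles" mode. The suffix lists and the function name are assumptions; this is a sketch of a planned feature, not shipped behaviour.

```python
from pathlib import Path
from typing import Iterator

VIDEO_SUFFIXES = {".mkv", ".mp4", ".avi", ".mov"}   # assumed, extend as needed
SUBTITLE_SUFFIXES = (".srt", ".vtt", ".ass")


def videos_needing_subs(folder: Path, skip_existing: bool = True) -> Iterator[Path]:
    """Yield video files in `folder`, optionally skipping ones that already have subtitles."""
    for path in sorted(folder.iterdir()):
        if not path.is_file() or path.suffix.lower() not in VIDEO_SUFFIXES:
            continue
        # A subtitle "exists" if a file with the same stem and a subtitle suffix is present.
        has_subs = any(path.with_suffix(s).exists() for s in SUBTITLE_SUFFIXES)
        if skip_existing and has_subs:
            continue
        yield path
```

Each yielded path could then be handed to the single-file pipeline, one at a time or through a worker pool if parallel processing is enabled.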

3. Better cleanup and temporary file management

The app creates temporary files during processing.

They’re cleaned up, but I’d like to make the whole process more transparent:

- clearer logging

- optional --keep-temp flag for debugging

- better naming for intermediate files

Small things, but they make the tool feel more polished.
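A keep-or-clean switch like this fits neatly into a context manager. The sketch below is an assumption about how it might be built; `work_dir` and the directory prefix are hypothetical names.

```python
import contextlib
import shutil
import tempfile
from pathlib import Path


@contextlib.contextmanager
def work_dir(keep: bool = False, prefix: str = "bibble-subs-"):
    """Yield a scratch directory; delete it afterwards unless `keep` is set."""
    path = Path(tempfile.mkdtemp(prefix=prefix))
    try:
        yield path
    finally:
        if keep:
            # Tell the user where the intermediate files ended up.
            print(f"Keeping temporary files in {path}")
        else:
            shutil.rmtree(path, ignore_errors=True)


with work_dir() as scratch:
    (scratch / "audio.wav").touch()  # stand-in for the extracted audio
```

Because the cleanup sits in a `finally` block, the scratch directory is removed even if transcription fails halfway through.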

4. More control over subtitle formatting

ASS already opens the door to styling, but future versions may include:

- line‑length control

- automatic segment merging

- punctuation cleanup

- karaoke timing modes

- optional “minimalist” subtitle style

Nothing too fancy — just enough to make the output feel intentional.
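Two of these, line-length control and segment merging, can be sketched with the standard library alone. The 42-character width and 0.3-second gap are illustrative defaults I picked, not settings from the tool, and both function names are hypothetical.

```python
import textwrap


def wrap_cue(text: str, max_chars: int = 42) -> str:
    """Collapse whitespace and wrap a cue so no line exceeds max_chars."""
    return "\n".join(textwrap.wrap(" ".join(text.split()), width=max_chars))


def merge_short_segments(segments, max_gap: float = 0.3, max_chars: int = 84):
    """Join neighbouring (start, end, text) cues separated by a tiny pause."""
    merged = []
    for start, end, text in segments:
        if merged:
            prev_start, prev_end, prev_text = merged[-1]
            joined = f"{prev_text} {text}"
            # Merge only when the pause is short and the result stays readable.
            if start - prev_end <= max_gap and len(joined) <= max_chars:
                merged[-1] = (prev_start, end, joined)
                continue
        merged.append((start, end, text))
    return merged


print(wrap_cue("one two three four", max_chars=9))
print(merge_short_segments([(0.0, 1.0, "Hello"), (1.1, 2.0, "there"), (5.0, 6.0, "Bye")]))
```

Merging before wrapping tends to give better results, since the wrapper then sees the whole sentence rather than two fragments.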

5. Optional post‑processing with language models

Not rewriting the dialogue — just:

- fixing casing

- fixing punctuation

- removing filler words

- smoothing out Whisper quirks

Think of it as a light editorial pass, not a rewrite.
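To show the kind of pass I mean, here is a deliberately simple rule-based stand-in. It uses regular expressions, not a language model, so it only handles the obvious cases, and it is English-specific; the filler list and `tidy_line` are assumptions for illustration.

```python
import re

# A tiny, assumed list of fillers; a real pass would need a longer one.
FILLERS = re.compile(r"\b(?:um+|uh+|erm+)\b[,.]?\s*", re.IGNORECASE)


def tidy_line(text: str) -> str:
    """Apply a light editorial pass: drop fillers, fix spacing and basic casing."""
    text = FILLERS.sub("", text)                  # remove filler words
    text = re.sub(r"\s+", " ", text).strip()      # collapse runs of whitespace
    text = re.sub(r"\s+([,.!?;:])", r"\1", text)  # no space before punctuation
    text = re.sub(r"\bi\b", "I", text)            # English pronoun casing
    if text:
        text = text[0].upper() + text[1:]         # capitalise the first letter
    return text


print(tidy_line("um, so  i went to the shops , uh yesterday ."))
```

The words themselves are never reordered or replaced, which is the line between tidying and rewriting; a language-model pass would need the same constraint.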